Getting Started with vRA 8 Terraform Runtime Integration

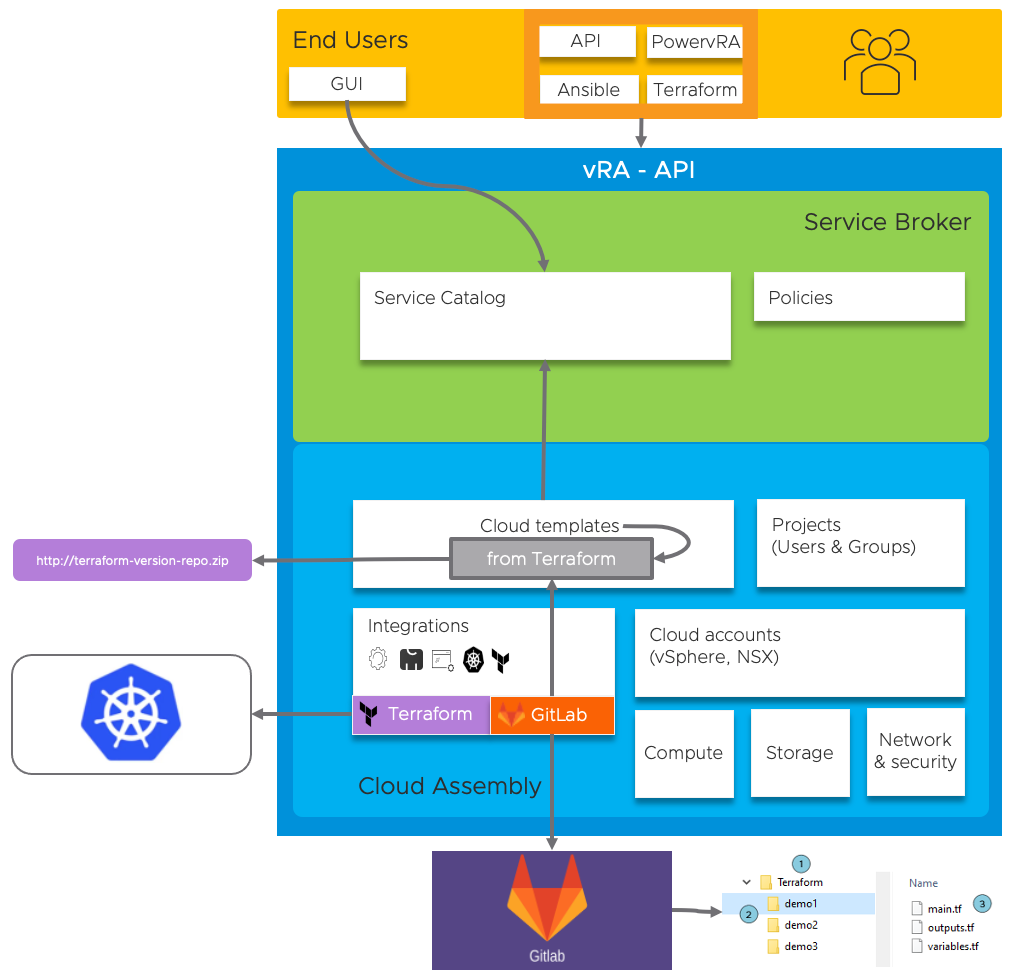

Terraform is gaining a lot of traction in the Infrastructure-as-Code space, both from an end-user perspective and as an integration within other products. Let me explain what each of these brings to vRA 8!

Terraform as end-user

- For configuring vRA constructs such as Cloud Accounts, Projects, Users, Groups and Cloud Templates

- For triggering Cloud Template deployments

- Leverages a local Terraform executable and providers (vSphere, vRA, NSX, …)

Terraform as integration

- For consuming existing Terraform configuration files in Cloud Templates and presenting them as a Service Catalog item (XaaS, Day-2 actions)

- Leverages an external K8S cluster to run the Terraform Pod and GitLab to retrieve the configuration files

This drawing visualizes the above:

In this blog I will walk through all the steps needed to set up Terraform as an integration and show how to create a simple XaaS-Create-VMfolder Cloud Template.

Prerequisites

- We need a Managed or External Kubernetes Cluster with a pre-created namespace to run the terraform container

- GitLab integration for the retrieval of terraform configuration files (main.tf, variable.tf)

- vRA8 deployment with Enterprise license for Managed Kubernetes support, Advanced license for external kubeconfig

Kubernetes Clusters

There are three primary options for working with Kubernetes resources in vRealize Automation Cloud Assembly.

- Managed Cluster: You can integrate VMware Tanzu Kubernetes Grid Integrated Edition (TKGI), formerly PKS, or Red Hat OpenShift with vRealize Automation Cloud Assembly to configure, manage and deploy Kubernetes resources on these platforms

- vSphere with Tanzu: You can leverage a vCenter cloud account to access supervisor namespaces and their Kubernetes-based functionality.

- External: Finally, we can integrate external Kubernetes resources in vRealize Automation Cloud Assembly. For this option we need the address:port, the certificate and credentials (username/password, certificate or bearer token).

For Terraform integration we support the following runtime types:

- Managed Cluster

- External kubeconfig

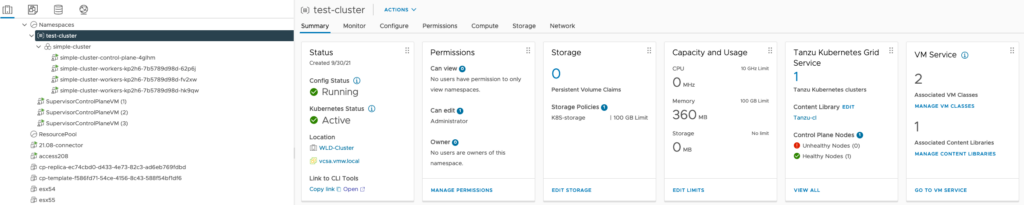

In this example we will be leveraging External Kubeconfig pointing to a Tanzu guest-cluster.

GitLab integration

To provide and maintain Terraform configurations we require a GitLab/GitHub/Bitbucket repository for source-control. This is also an integration we create and configure within vRA8.

In this example we will be using a GitLab repository.

Step-by-step guide

Prepare Kubernetes Cluster

For this demo I used my already available vSphere with Tanzu environment, created a new ‘vra’ namespace within the guest-cluster and made sure the role bindings were set up correctly.

    # kubectl get ns vra
    NAME   STATUS   AGE
    vra    Active   5d13h

Prepare GitLab

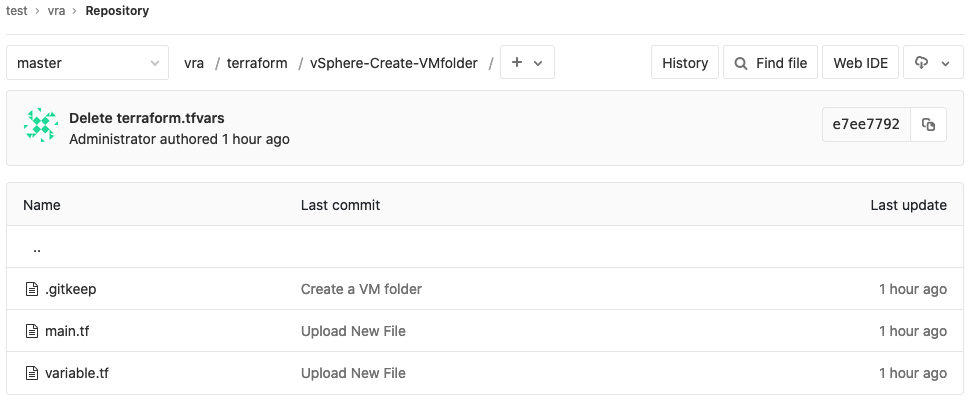

My local GitLab instance was configured with the sub-folder structure that vRA requires, as shown below.

The terraform configuration contains the following code:

main.tf:

    provider "vsphere" {
      user           = var.vsphere_user
      password       = var.vsphere_password
      vsphere_server = var.vsphere_server

      # If you have a self-signed cert
      allow_unverified_ssl = true
    }

    data "vsphere_datacenter" "dc" {}

    resource "vsphere_folder" "folder" {
      path          = var.folder_name
      type          = "vm"
      datacenter_id = data.vsphere_datacenter.dc.id
    }
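The empty `vsphere_datacenter` data source only resolves when the vCenter inventory contains exactly one datacenter. For environments with several, a minimal sketch could look the datacenter up by name instead. The `datacenter_name` variable is an assumption for illustration and is not part of the original repository; it would also need a matching input in the Cloud Template.

```hcl
# Hypothetical extra variable, not present in the original variable.tf
variable "datacenter_name" {
  type        = string
  description = "Name of the vSphere datacenter that should contain the folder"
}

# Replaces the empty data block: selects the datacenter explicitly by name
data "vsphere_datacenter" "dc" {
  name = var.datacenter_name
}
```

The `vsphere_folder` resource can keep referencing `data.vsphere_datacenter.dc.id` unchanged.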

variable.tf:

    variable "vsphere_user" {}
    variable "vsphere_password" {}
    variable "vsphere_server" {}
    variable "folder_name" {}
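Empty variable blocks work, but Terraform 0.12 and later also accept a type constraint and a description per variable, which makes the configuration easier to read and lets Terraform reject values of the wrong type. A sketch of the same four variables with both added (the description texts are my own wording, not taken from the repository):

```hcl
variable "vsphere_user" {
  type        = string
  description = "Account used to log in to vCenter"
}

variable "vsphere_password" {
  type        = string
  description = "Password for the vCenter account"
}

variable "vsphere_server" {
  type        = string
  description = "FQDN or IP address of the vCenter server"
}

variable "folder_name" {
  type        = string
  description = "Path of the VM folder to create, relative to the datacenter"
}
```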

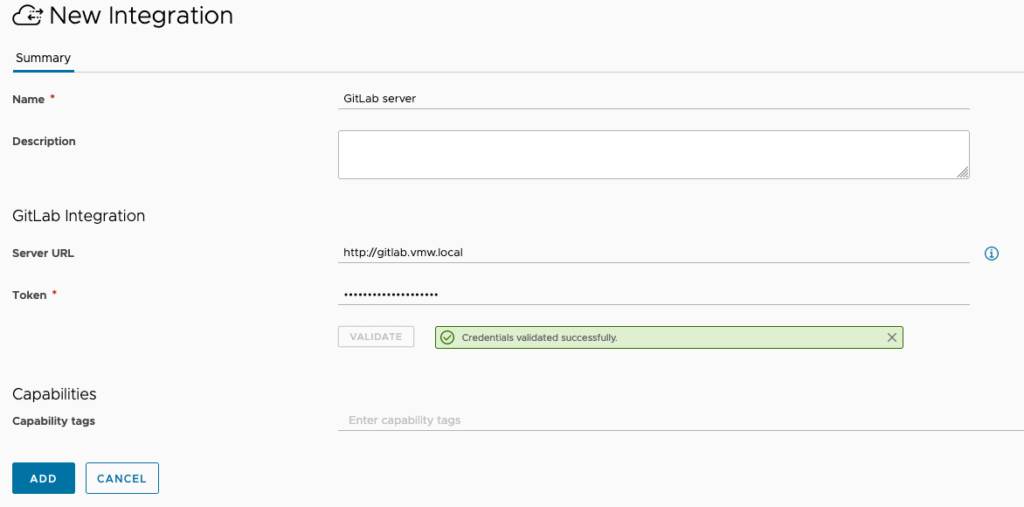

Set up GitLab integration

Use your GitLab address and personal access token to set up the integration:

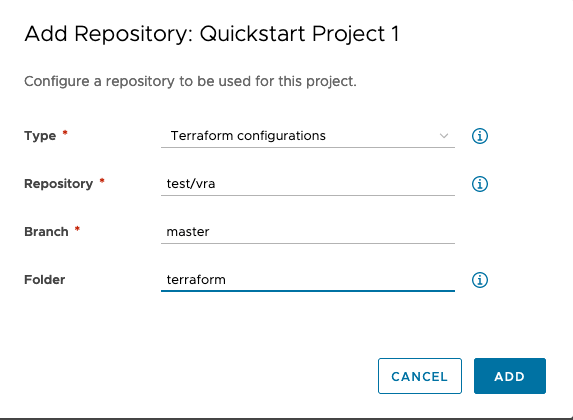

After adding the integration we can assign it to a project and configure the repository:

Set Up Terraform integration

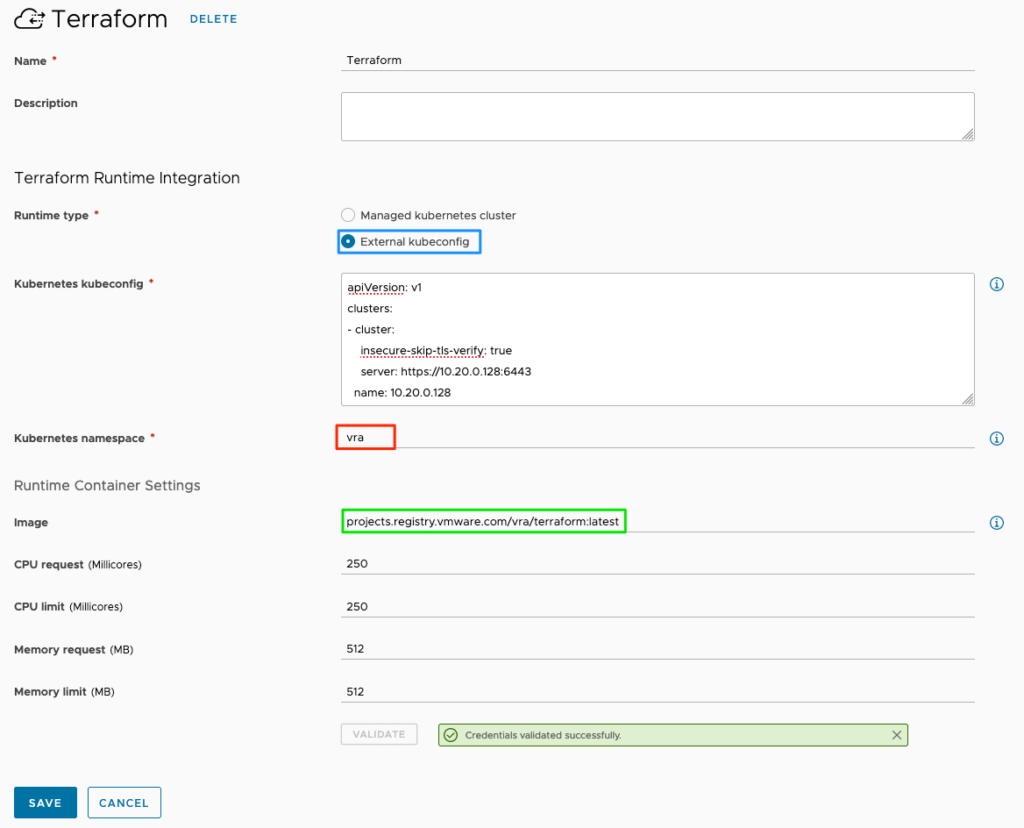

For this example we will use the external kubeconfig from my Tanzu cluster to set up the integration. Be sure to reference an existing namespace on your Kubernetes cluster!

Note: this example references a public terraform-image! If needed, you can use your local registry.

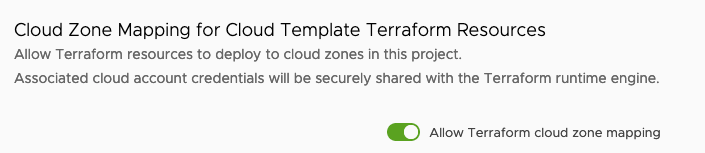

Finally, enable Cloud Zone Mapping for Terraform within the project provisioning properties:

This concludes the configuration part of using Terraform and GitLab within your project. Next up is creating our example Cloud Template and consuming the available integrations.

Example Cloud Template using Terraform

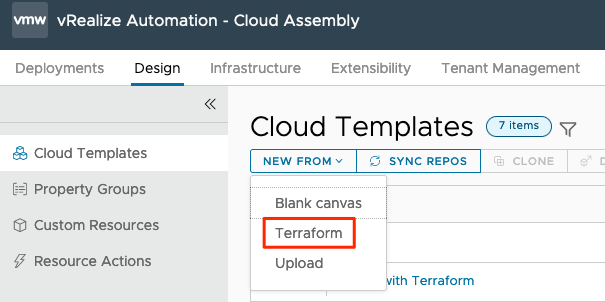

This XaaS-example uses the New From>Terraform Cloud Template feature:

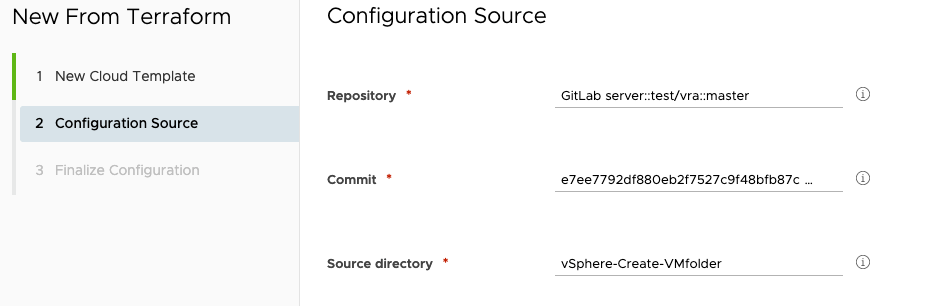

Define a name and select your Terraform enabled project. Next, select your repository, commit (auto-selected) and Source folder:

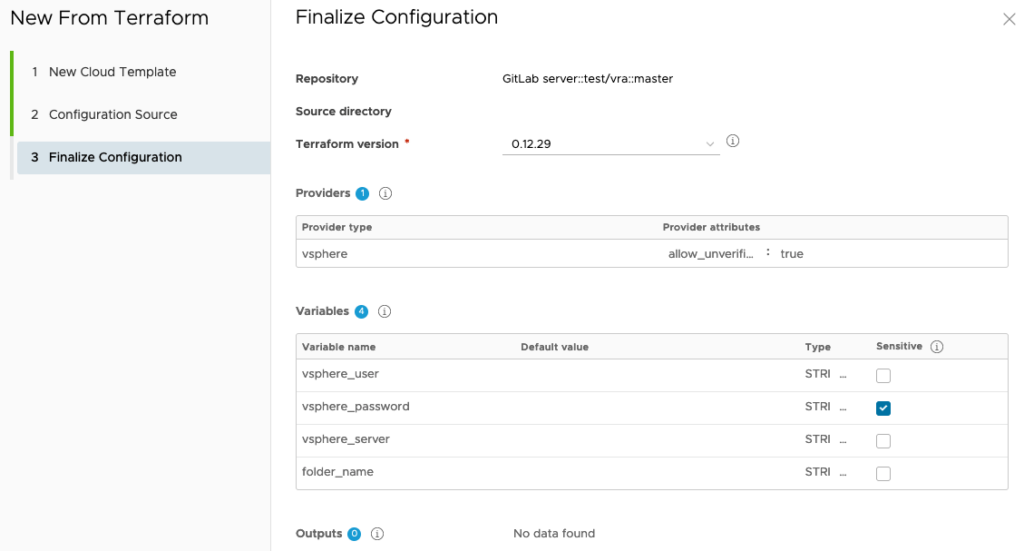

As the last step you can edit the Terraform properties that were read from the Terraform files in the repository. These will end up in the resulting Cloud Template YAML code:

Note: the latest terraform version will be used by default. You can override, or even update, the version used (please keep in mind the latest supported Terraform version is 0.14.11)!
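If you want the configuration itself to guard against an unsupported runtime, Terraform's `required_version` setting can express the accepted range. A minimal sketch, assuming you add it to one of the .tf files in the source folder and that the bounds below fit your vRA release:

```hcl
terraform {
  # Lower bound: the version used in this example.
  # Upper bound: the latest version mentioned as supported in this post.
  # Both are assumptions to adjust for your own environment.
  required_version = ">= 0.12.29, <= 0.14.11"
}
```

Terraform refuses to run a configuration when the running version falls outside this constraint, so a mismatch shows up as a clear error instead of unexpected behaviour.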

Resulting code is as follows (which you can edit to your needs):

    inputs:
      vsphere_user:
        type: string
      vsphere_password:
        type: string
        encrypted: true
      vsphere_server:
        type: string
      folder_name:
        type: string
    resources:
      terraform:
        type: Cloud.Terraform.Configuration
        properties:
          variables:
            vsphere_user: '${input.vsphere_user}'
            vsphere_password: '${input.vsphere_password}'
            vsphere_server: '${input.vsphere_server}'
            folder_name: '${input.folder_name}'
          providers:
            - name: vsphere
              # List of available cloud zones: vcsamgmt.vmw.local/Datacenter:datacenter-2
              cloudZone: 'vcsamgmt.vmw.local/Datacenter:datacenter-2'
          terraformVersion: 0.12.29
          configurationSource:
            repositoryId: f0ba06da-c960-4788-aa84-77cbbaad5124
            commitId: e7ee7792df880eb2f7527c9f48bfb87caca8a6f1
            sourceDirectory: vSphere-Create-VMfolder

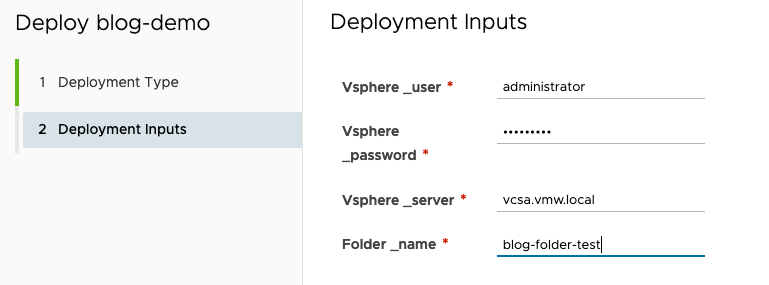

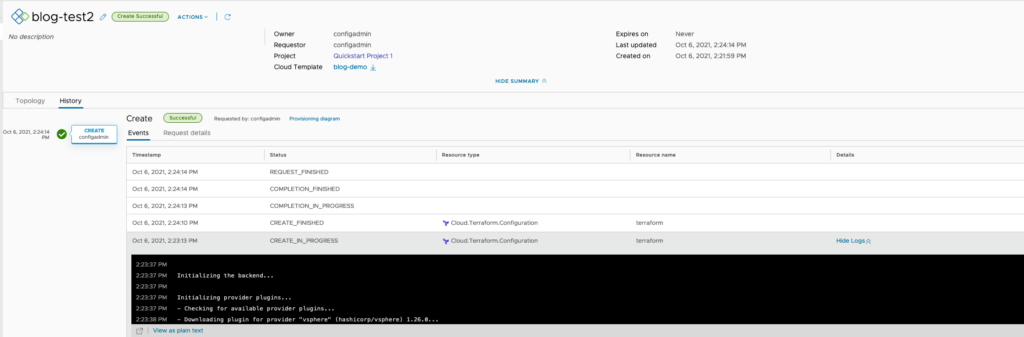

We can now deploy and monitor the results:

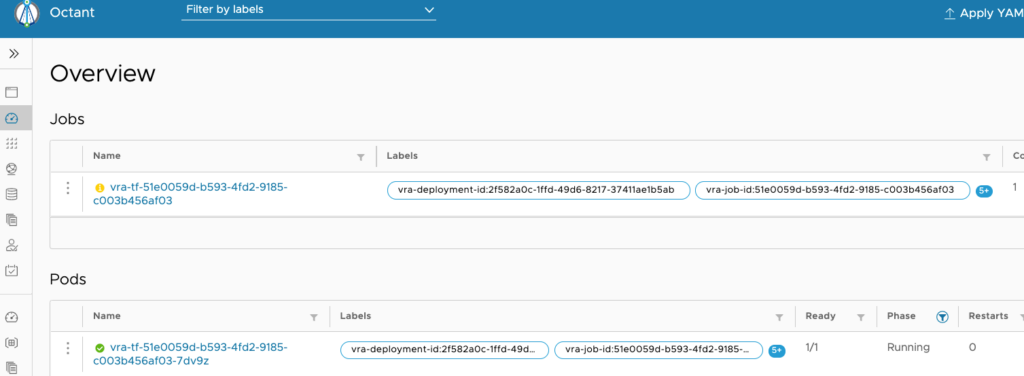

On our Kubernetes cluster we see the Terraform Job and Pod spinning up!

In the Job details we can see the command line used to run the terraform apply job:

In vRA we also see more details to check:

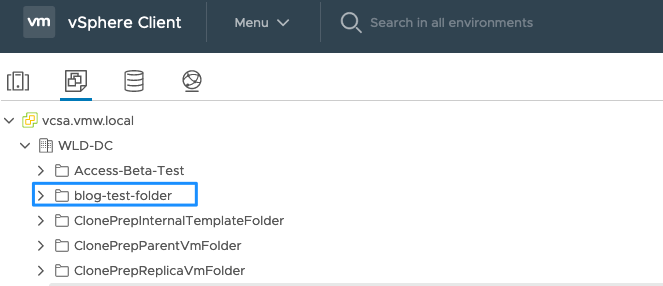

And of course, the final result in vCenter:

This concludes this blog about Terraform integration in vRA 8!
